Most product failures are not glamorous technology meltdowns. They are quiet collapses built on shaky assumptions: that users will care, that the pain is big enough to warrant change, and that the market will adopt something new before inertia wins.

The data reflects it. In an analysis of 431 VC-backed startups that shut down since 2023, CB Insights found “ran out of capital” in 70% of cases — but stressed it is usually the final cause, not the root problem. Poor product–market fit appeared in 43%, bad timing or macro conditions in 29%, and unsustainable unit economics in 19% (founders often cite multiple reasons, so shares exceed 100%). Separately, Atlassian’s State of Product 2026 reports that 84% of product teams worry their current products will not succeed in the market.

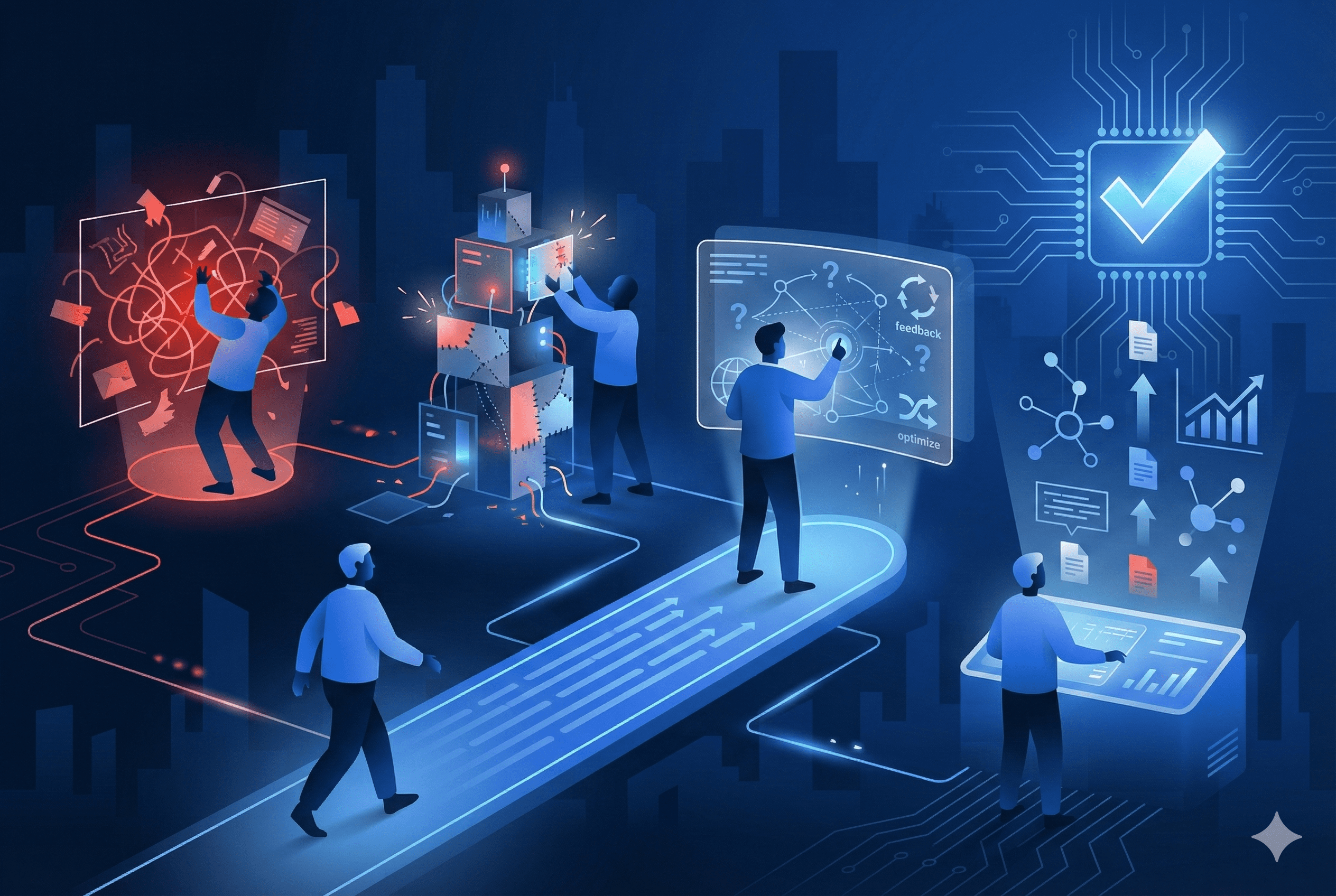

Great products are still not guaranteed, but teams build them more deliberately when they apply a small set of fundamentals across discovery, delivery, and go-to-market.

TL;DR:

CB Insights ties 43% of analysed startup failures to poor product–market fit. Atlassian finds 84% of product teams anxious about market success. This guide covers seven fundamentals so teams can align on evidence, not assumptions.

1. Know your customer

Knowing your customer starts with a grounded picture of who they are and how they actually work, not a persona deck full of demographics. Atlassian notes that 80% of teams still do not involve engineers early; that gap often starts here, when “the customer” lives only in product and sales slides.

CB Insights found 43% of analysed shutdowns cited poor product–market fit, and two-thirds of those PMF failures were early-stage companies that never found a market. In practice, that “never found a market” story rarely starts with code. It starts with a fuzzy picture of the person on the other side of the screen.

This section is about first-pass clarity: the minimum viable understanding that lets your team point at one real human context and say, “we are building for this reality.”

What to capture

- Daily reality: tools, handoffs, meetings, and where time disappears.

- Emotional drivers: what creates stress, fear of looking bad, or urgency to change.

- Decision context: who influences budget, security, procurement, and rollout.

How to build the picture

- Interviews: candid conversations with concrete prompts (“walk me through last Tuesday”).

- Contextual observation: watch people use today’s tools; note workarounds and hacks.

- Diary or lightweight logging: capture frequency and triggers over several days.

- Shadowing: follow the job through the environment — warehouse, clinic floor, home office.

- Empathy mapping: align the team on what users say, think, feel, and do in parallel.

Treat “know your customer” as the evidence base your roadmap should trace back to. Teams that skip this step often discover too late that product–market fit was the thing they never had — and CB Insights attributes 43% of studied failures to exactly that gap.

2. Truly understand your customer

If section one is discovery, this section is behavioural truth and second-pass rigour: what people do, repeat, and pay for — especially after interviews start feeling “done.” Atlassian reports that only 60% of teams make experimentation a regular habit, while 40% do little or none — exactly where “we talked to ten people” quietly turns into false confidence.

Knowing someone’s title and pain keywords is not the same as understanding how strongly behaviour must change for your product to win. The question shifts here. You are no longer asking who the user is. You are asking: under what conditions will they actually switch — and pay?

That is a behavioural question. It shows up in frequency, workarounds, failure modes, and who gets blamed when the old way breaks.

Second-pass research

- Return interviews: same people, new prompts — compare stories week to week for stability.

- “Show me the last time”: force specifics; generic pain language is a red flag.

- Contradiction hunting: where stated priorities conflict with observed behaviour.

- Usage signals (when available): activation, return intervals, drop-off steps — even rough logs beat pure recall.

- Buying archaeology: map rejections, stalled pilots, and “we’ll revisit Q3” patterns.
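
The usage signals in the list above can be approximated from even a rough event log. The following is a minimal sketch, assuming a hypothetical log of `(user_id, date)` pairs; the function name `return_intervals` and the sample data are illustrative and not taken from any real product.

```python
from collections import defaultdict
from datetime import date
from statistics import median


def return_intervals(events):
    """Map each user to the gaps (in days) between consecutive active days."""
    days_by_user = defaultdict(set)
    for user_id, day in events:
        days_by_user[user_id].add(day)

    intervals = {}
    for user_id, days in days_by_user.items():
        ordered = sorted(days)
        intervals[user_id] = [(b - a).days for a, b in zip(ordered, ordered[1:])]
    return intervals


# Hypothetical sample log, invented purely for illustration.
events = [
    ("ana", date(2025, 3, 3)),
    ("ana", date(2025, 3, 4)),
    ("ana", date(2025, 3, 10)),
    ("ben", date(2025, 3, 3)),
]

intervals = return_intervals(events)

# Users with no gaps never came back; keep only returning users for the median.
median_gap = {u: median(gaps) for u, gaps in intervals.items() if gaps}

print(intervals)   # {'ana': [1, 6], 'ben': []}
print(median_gap)  # {'ana': 3.5}
```

A user with an empty gap list tried the product once and never returned, which is often a more useful prompt for a return interview than any recalled pain point.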

Questions that expose depth

- Top frustration: what single problem they would fund this quarter if they could.

- Current workaround: the full messy process — including spreadsheets, side chats, and manual checks.

- Rejections: tools tried and abandoned; what broke trust or adoption.

- Influence map: who can veto, who can champion, who bears operational risk.

- Decision path: single owner vs committee; what evidence actually moves the decision.

In real builds, the turning point is rarely more opinions. It is repeatable evidence that behaviour, budgets, and urgency line up — and that evidence only comes from the kind of structured inquiry most teams skip when they feel confident early.

3. Find the value and confirm it

Users rarely pay for features on a spreadsheet. They pay for relief, outcomes, and credible proof that switching is worth the hassle. The job here is to translate ideas into value hypotheses you can invalidate cheaply.

Feature vs core value

- Alleviate pain: time returned, cost removed, risk reduced, errors prevented.

- Justify the switch: the new upside must exceed migration effort and political cost.

Low-risk validation moves

- Pre-sale intent: deposits, LOIs, or paid pilots — money and commitment beat applause.

- Concierge MVP: deliver the outcome manually to learn workflow fit before you scale code.

- Landing + targeted traffic: measure qualified interest, not vanity visits.

- Outbound tests: small, measured outreach with a clear next-step conversion.

- Interactive prototypes: test comprehension and desire before production engineering.
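
The difference between qualified interest and vanity visits in the list above comes down to which visits sit in the denominator. The sketch below assumes a hypothetical `Visit` record with a segment label and a flag for whether the visitor took the next step (for example, booked a call or left a deposit); the segment names and sample data are made up.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    segment: str          # hypothetical segment label, e.g. "ops_manager"
    took_next_step: bool  # e.g. booked a call or left a deposit


def qualified_conversion(visits, target_segments):
    """Conversion rate among visits from target segments; None if there are none."""
    qualified = [v for v in visits if v.segment in target_segments]
    if not qualified:
        return None
    return sum(v.took_next_step for v in qualified) / len(qualified)


# Hypothetical traffic sample, invented for illustration.
visits = [
    Visit("ops_manager", True),
    Visit("ops_manager", False),
    Visit("student", False),
    Visit("student", False),
]

qualified_rate = qualified_conversion(visits, {"ops_manager"})
vanity_rate = sum(v.took_next_step for v in visits) / len(visits)

print(qualified_rate)  # 0.5
print(vanity_rate)     # 0.25
```

The same traffic yields two different stories: counting every visit dilutes the signal, while counting only the segment you are building for shows whether the value hypothesis holds for them.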

Poor PMF shows up loudly in failure data. CB Insights tags 43% of studied shutdowns with product–market fit issues, which means validation is not a formality. It is how you buy down the risk of building the wrong thing.

4. You are always competing

Your competitor is often inertia, not a logo on a slide. CB Insights highlights bad timing and macro conditions (29%) and unsustainable unit economics (19%) alongside PMF — markets and budgets frequently say no even when the demo sparkles.

You compete with:

- DIY hacks: spreadsheets, email threads, tribal knowledge.

- Partial tools: “good enough” legacy systems stitched together.

- Inaction: the comfort of the current routine.

Mapping competition

- Alternative workflows: journey-map the current process end-to-end.

- Adjacent solutions: free tools, open-source scripts, niche vertical platforms.

- Non-consumption: when doing nothing feels safer than onboarding risk.

When capital runs out, it is tempting to blame fundraising alone — yet CB Insights frames the 70% “ran out of capital” figure as the terminal event, with PMF, timing, and unit economics explaining why runway disappeared. Map why the world says no, not only which vendor you dislike.

5. Differentiate to win

Marginal improvements rarely reset buying committees. Differentiation is a sharp story backed by proof: a capability buyers can repeat in a demo, onboarding conversation, and renewal.

Why “10% better” stalls

- Buyers must rationalise change management, retraining, and risk.

- Incremental gains often fail against inertia (see fundamental 4).

Find your “big win”

- Radical efficiency: hours to minutes on a recurring workflow.

- Unique convenience: automation in a step competitors treat as manual forever.

- Personalised outcomes: recommendations that feel bespoke without extra admin.

Sustain the edge

- Relentless focus: design, onboarding, and marketing repeat the same winning wedge.

- Instrument feedback: measure satisfaction on the differentiator, not generic NPS alone.

- Prove it: case metrics, before/after comparisons, and third-party validation where possible.

Markets reward clarity. When teams cannot articulate why switching is rational, they lose to non-consumption and “good enough” incumbents — the same structural headwinds visible in the CB Insights data on timing, economics, and PMF.

6. Build to your budget

Constraints are not the enemy. Unfunded scope is. Minimum viable scope matches capital, calendar, and learning goals so each release buys the next decision, not just the next feature.

Minimum viable scope

- Ruthless prioritisation: rank by impact on your differentiator and riskiest assumptions.

- Manual where it helps: a concierge bridge buys learning more cheaply than scaling prematurely.

- Transparent roadmap: honesty earns trust; vagueness invites silent churn.
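
One way to make the prioritisation step above concrete is to score each candidate and fill the budget greedily. This is a sketch under stated assumptions: the 0 to 5 scoring scale, the "score per week of effort" ratio, and every item name are hypothetical, and a team would substitute its own estimates.

```python
from dataclasses import dataclass


@dataclass
class ScopeItem:
    name: str
    differentiator_impact: int  # 0-5, the team's own estimate (assumed scale)
    assumption_risk: int        # 0-5, how much of a risky assumption it tests
    effort_weeks: float         # must be greater than zero


def prioritise(items, budget_weeks):
    """Pick items by (impact + risk) per week of effort until the budget runs out."""
    ranked = sorted(
        items,
        key=lambda i: (i.differentiator_impact + i.assumption_risk) / i.effort_weeks,
        reverse=True,
    )
    chosen, remaining = [], budget_weeks
    for item in ranked:
        if item.effort_weeks <= remaining:
            chosen.append(item.name)
            remaining -= item.effort_weeks
    return chosen


# Hypothetical backlog, invented for illustration.
items = [
    ScopeItem("bulk import automation", 5, 4, 3),
    ScopeItem("dark mode", 1, 0, 1),
    ScopeItem("single sign-on", 2, 3, 4),
    ScopeItem("concierge onboarding", 3, 5, 1),
]

chosen = prioritise(items, budget_weeks=4)
print(chosen)  # ['concierge onboarding', 'bulk import automation']
```

The greedy rule is deliberately simple. Its purpose is to force the team to write down impact, risk, and effort estimates side by side, not to produce an optimal plan.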

Financial discipline

- Time-boxed delivery: short cycles with defined outcomes and spend caps.

- Metric gates: tie continued investment to activation, retention, or revenue signals you pre-agree.

- Cost–value reviews: regularly compare burn to validated user value, not roadmap hope.
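
A metric gate from the list above can be as small as a dictionary of pre-agreed minimums checked at the end of each time-box. The sketch below is illustrative only: the metric names and thresholds are hypothetical, and a missing metric is treated as a failed gate by assumption.

```python
def gate_decision(observed, gates):
    """Compare observed metrics with pre-agreed minimums.

    Returns ("continue", []) when every gate is met, otherwise
    ("review", [names of failed metrics]). A metric missing from
    `observed` counts as failed (an assumption of this sketch).
    """
    failed = [
        metric
        for metric, minimum in gates.items()
        if observed.get(metric, 0.0) < minimum
    ]
    return ("continue" if not failed else "review", failed)


# Hypothetical thresholds agreed before the cycle started.
gates = {"activation_rate": 0.40, "week4_retention": 0.25}

# Hypothetical results measured at the end of the cycle.
observed = {"activation_rate": 0.46, "week4_retention": 0.18}

decision, failed = gate_decision(observed, gates)
print(decision, failed)  # review ['week4_retention']
```

Agreeing the thresholds before the cycle starts is the point: it keeps the investment conversation about evidence rather than about how much effort has already been spent.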

Atlassian finds nearly half of teams short on time for strategic planning, roadmapping, or data analysis — a recipe for oversized builds. Budget-aware scoping turns “we shipped a lot” into “we learned what matters.”

7. There is no ultimate guide

Frameworks help teams synchronise. They do not replace context. The best teams adapt principles when markets, regulation, or channels shift.

Use the fundamentals to understand why a step exists, then tailor it. Name trade-offs openly — every roadmap choice sacrifices something else. Treat new data as a reason to revise, not a threat to the original plan.

When Atlassian reports that 84% of product teams worry about market success, the fix is rarely another checklist. It is better loops: customer truth, validation, and a learning cadence that matches your actual risk.

Bringing it all together

These seven fundamentals work as a repeating loop, not a one-off checklist:

- Know your customer — grounded context, shared across disciplines.

- Truly understand your customer — behavioural depth after early confidence.

- Find the value and confirm it — proofs, not promises.

- You are always competing — inertia, hacks, and “good enough” included.

- Differentiate to win — a sharp wedge you can demonstrate.

- Build to your budget — scope that matches learning and runway.

- There is no ultimate guide — adapt the principles as evidence changes.

The thread running through all seven is clarity: about users, value, competition, and trade-offs. Products that launch, endure, and scale are rarely the most technically impressive ones. They are the ones whose teams were honest about what they did not yet know — and disciplined enough to find out before spending the budget.

Where shutdown stories cluster (illustrative)

The chart below uses percentages reported by CB Insights for 385 companies with identifiable failure reasons. Totals exceed 100% because founders often cited multiple causes.

Figure 1. Why startups failed (selected reasons): ran out of capital 70%, poor product–market fit 43%, bad timing / macro 29%, unsustainable unit economics 19%. Source: CB Insights analysis of post-2023 shutdowns; multiple reasons per company are possible, so shares can sum above 100%.
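
Because each company can cite several reasons, these shares are not slices of a single pie. A quick check using only the four percentages quoted above shows the overlap: the sum of the shares, divided by 100, is the average number of these four reasons cited per company.

```python
# Shares (in percent) as quoted from CB Insights in this article.
shares = {
    "ran out of capital": 70,
    "poor product-market fit": 43,
    "bad timing / macro": 29,
    "unsustainable unit economics": 19,
}

total = sum(shares.values())
# Each share is (companies citing the reason) / (all companies), so the sum of
# shares equals (total citations) / (all companies): the mean citations per company.
avg_reasons_per_company = total / 100

print(total)                    # 161
print(avg_reasons_per_company)  # 1.61
```

On average, then, each company in the analysis was tagged with about 1.6 of these four reasons, which is consistent with reading "ran out of capital" as the final event layered on top of an earlier cause.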

Want to apply these fundamentals to a real product decision?

Talk to Polymorph — we work with founders, product leads, and executive teams to validate, build, and improve software that earns its place in the business.

Knowing your customer is first-pass context: roles, environment, workflows, and stakeholders. Truly understanding your customer is second-pass behavioural rigour — frequency, workarounds, contradictions between what people say and do, and the conditions under which they will switch. Atlassian highlights that 40% of teams do little or no experimentation; this section is where you stress-test early certainty.

Technology failure is not typically the headline story. CB Insights finds 70% of analysed shutdowns involved running out of capital, but emphasises that PMF (43%), timing (29%), and economics (19%) explain why runway ended. The lesson: validate market and behaviour, not only engineering risk.

Enough validation is whatever lets you invalidate your riskiest assumptions cheaply: evidence of willingness to pay or adopt, clarity on the incumbent workflow you must displace, and a differentiated wedge you can demonstrate. Atlassian notes only 60% of teams practise regular experimentation, so treat learning cadence as a discipline, not a workshop.

Your competition is anyone or anything that fills the job today: spreadsheets, manual processes, partial tools, or inertia. Mapping those honestly prevents surprise losses to "no decision."

This guide is not a one-off checklist. Use it as a lens you revisit across discovery, delivery, and scaling, adapting to your market, regulation, and channel reality while keeping clarity on users, value, and competition.